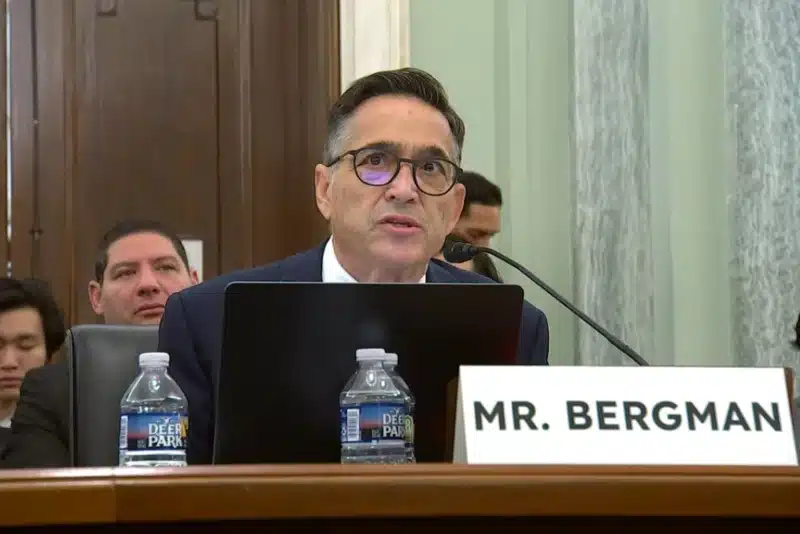

Social Media Victims Law Center (SMVLC) founding attorney Matthew P. Bergman met with British officials in early April to discuss how social media platforms can drive compulsive use and harm mental health, particularly among children and teenagers. Bergman recently helped secure a $6 million verdict in a Los Angeles social media lawsuit, in which a jury found that platforms owned by Meta and Google contributed to a plaintiff’s serious mental health harm following prolonged social media use.

During the trip, Bergman met with Liz Kendall, the U.K. secretary of state for science, innovation and technology, and Jess Phillips, the government’s minister for safeguarding and violence against women and girls, among other officials. The meetings, which came as U.K. leaders weigh possible restrictions on social media use by children under 16, centered on evidence that platform design features, including endless scrolling and algorithmic recommendations, can keep users online longer than they intend. Bergman discussed the harmful and addictive effects of social media on children and highlighted internal company records showing that executives understood the addictive nature of their products but continued to prioritize user engagement.

The trip illustrates how Bergman’s work through SMVLC is helping inform international conversations on child online safety and government policy. It also reflects the organization’s mission to hold social media companies legally accountable for the harm their products inflict on vulnerable users.